Why AI-powered vulnerability discovery and software exploitation don’t change the fundamentals of durable defense.

AI is going to make it faster and easier to find vulnerabilities and exploit them. Many advanced models, including Claude Mythos, trained on code, CVEs, and exploitation tradecraft, will compress the time between vulnerability discovery and weaponization. This is real, and it deserves serious attention. But before we catastrophize, we should anchor to a few stubborn truths.

We’ve always had more malware than detection logic

Signature-based detection was always a losing race. Malware variants and malicious artifacts have outnumbered signatures for as long as both have existed. Behavioral detection improved the math considerably, and for organizations that invest in depth of coverage, it casts a net that most adversaries, human or AI-driven, will struggle to avoid. Still, the gap between what adversaries produce and what defenders detect has always been nonzero. AI widens this gap by lowering the cost for adversaries to scale the production side of the equation, but AI doesn’t fundamentally change its structure.

All software is subject to the laws of physics. An exploit has to be delivered, and if it lands, it has to be followed by more moves. Every one of those moves takes place on hardware, makes system calls, and touches memory, disk, or the network in ways that are observable. An exploit may subvert a given control or suppress an expected behavior at a given stage of the attack chain, but it doesn’t exempt the target system from the constraints, controls, and observability built into the environment.

An exploit unlocks a door, but even chained exploits orchestrated by AI agents are not a skeleton key.

What defenders who are winning actually do

The organizations that are well-positioned today didn’t get there by chasing every new threat category. They got there by making sound architectural decisions and disciplined operational choices:

Minimize attack surface first. Rather than trying to defend everything equally, force activity through a small number of well-understood, well-defended, carefully monitored pathways. This isn’t glamorous, but it is foundational.

Implement zero trust and segmentation principles throughout. Require a combination of device and identity trust as a prerequisite for transactions, enforced at as many layers as practical. An adversary who gains a foothold still has to make moves, and segmentation and conditional access make that movement impossible or, at minimum, noisy and observable.
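To make the idea concrete, here is a minimal sketch of a conditional access check that requires both identity and device trust before a flow between segments is allowed. Everything in it is an assumption for illustration: the `Request` fields, the segment names, and the `ALLOWED_FLOWS` map are hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Request:
    user_authenticated: bool  # identity has been verified (hypothetical field)
    device_compliant: bool    # device is managed and healthy (hypothetical field)
    source_segment: str
    target_segment: str


# Hypothetical segment map: only these source -> target flows are permitted.
ALLOWED_FLOWS = {
    ("workstations", "app-tier"),
    ("app-tier", "db-tier"),
}


def evaluate(req: Request) -> Tuple[bool, str]:
    """Return (allowed, reason). Every denial carries a reason so it can be logged."""
    if not req.user_authenticated:
        return False, "identity not trusted"
    if not req.device_compliant:
        return False, "device not trusted"
    if (req.source_segment, req.target_segment) not in ALLOWED_FLOWS:
        return False, "flow not permitted between segments"
    return True, "ok"
```

The denial reasons matter as much as the decision itself: a foothold that tries to reach `db-tier` directly from `workstations` is blocked, and the attempt also becomes a logged, reviewable event.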

Use deception to backstop other defensive controls. Honeytokens, decoy assets, and deceptive infrastructure have two defining characteristics: legitimate users don’t trigger them, and they are exceptionally inexpensive to deploy. Any interaction is, by definition, suspicious. In a world of high alert volume and limited analyst time, that kind of signal is invaluable.
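As a rough sketch of why this signal is so cheap to act on, the check below flags any event that references a decoy credential. The decoy names and the event shape (a dict with a `credential` key) are assumptions made up for this example, not a real log schema.

```python
from typing import Dict, Iterable, Iterator

# Hypothetical decoy identifiers; no legitimate workflow should ever use these.
HONEYTOKENS = {"svc-backup-legacy", "decoy-api-key-0001"}


def honeytoken_alerts(events: Iterable[Dict]) -> Iterator[Dict]:
    """Yield a high-severity alert for every event that uses a decoy credential."""
    for event in events:
        if event.get("credential") in HONEYTOKENS:
            yield {"severity": "high", "reason": "honeytoken used", "event": event}
```

There is no scoring or baselining here because none is needed: a single match is already worth an analyst's attention.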

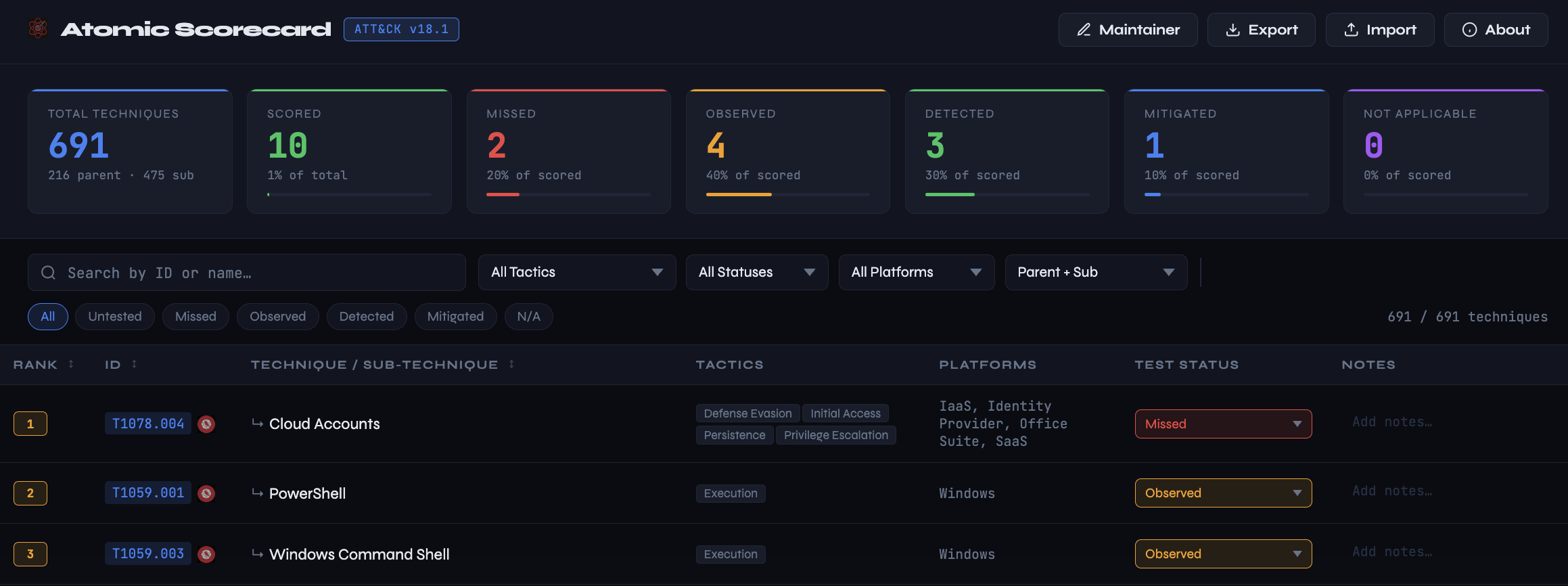

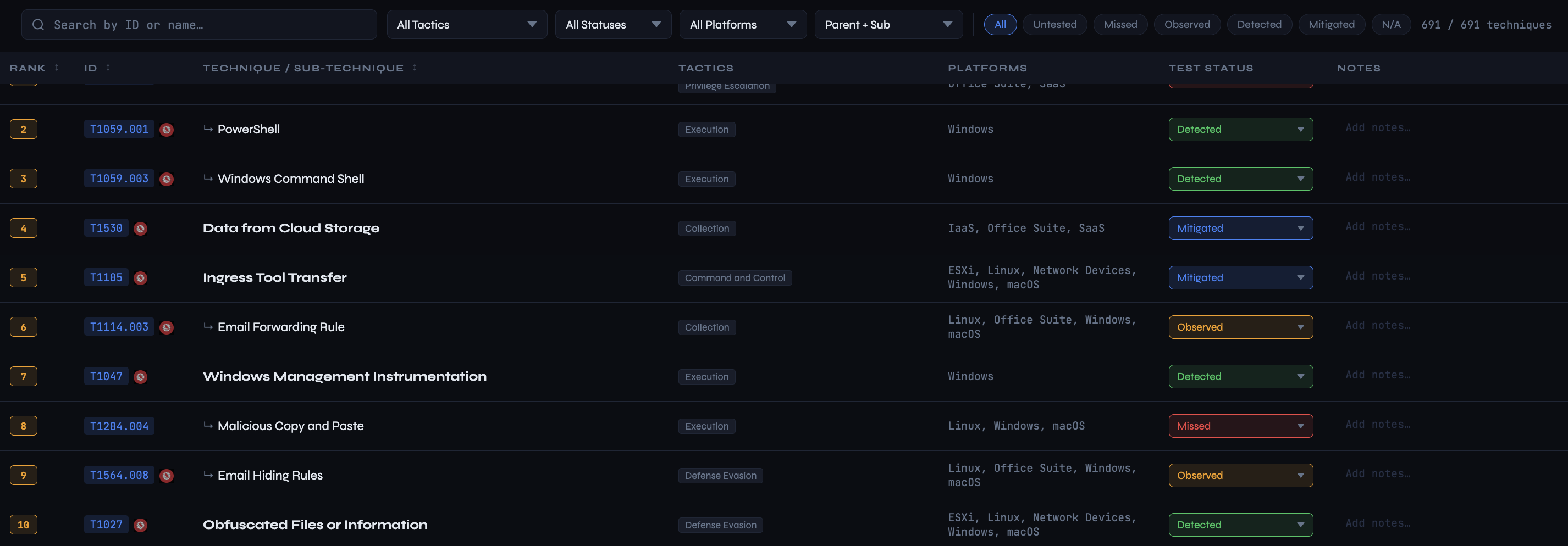

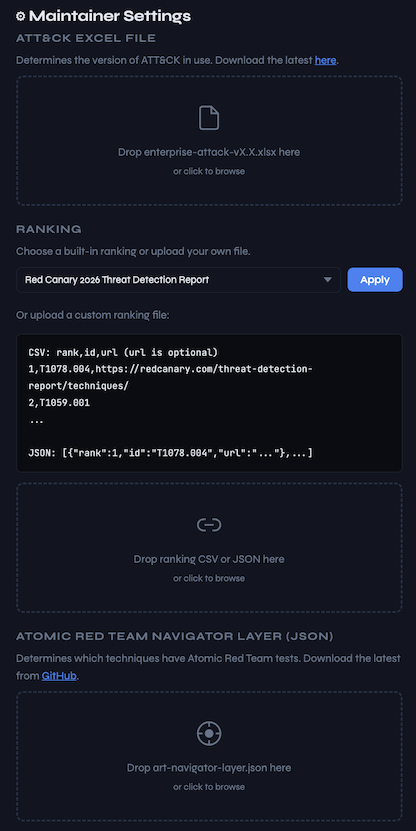

Apply intelligence-led, behavior-based detection and response. The set of adversary techniques that appear in the majority of real-world intrusions is not large. From Red Canary’s 2026 Threat Detection Report:

> [O]ver the last five years, we’ve detected at least one of the 10 most prevalent techniques in 46 percent of all detections. Over the same time period, we detected at least one of the top 20 techniques in 63 percent of detections.

The defenders who are winning have optimized for the set of prevalent techniques that almost all adversaries use, building detection coverage against it, investing in rapid investigation workflows, and standing up response capabilities that can act decisively when a threat is confirmed.
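A team can run the same kind of measurement against its own data. The sketch below assumes each detection is represented as a set of technique identifiers (ATT&CK-style IDs are used here only as sample labels) and computes the share of detections that include at least one of the top N techniques. The function names and data shape are hypothetical, and this is not how Red Canary computes its figures.

```python
from collections import Counter
from typing import Iterable, List, Set


def top_techniques(detections: Iterable[Set[str]], n: int) -> List[str]:
    """Return the n techniques that appear in the most detections."""
    counts = Counter(t for techniques in detections for t in techniques)
    return [t for t, _ in counts.most_common(n)]


def share_with_any(detections: List[Set[str]], techniques: Iterable[str]) -> float:
    """Fraction of detections that include at least one of the given techniques."""
    wanted = set(techniques)
    if not detections:
        return 0.0
    hits = sum(1 for techniques in detections if techniques & wanted)
    return hits / len(detections)
```

Calling `share_with_any(detections, top_techniques(detections, 10))` gives a local counterpart to the quoted top-10 figure, which helps decide where additional detection coverage would pay off first.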

The volume problem is real, but also solvable

Attack volume will increase. There is no serious argument against that. More actors with access to more capable tools will generate more exploits and malware variants, more intrusion attempts, more noise.

Defenders who are well-positioned today will still be well-positioned tomorrow. Not because nothing is changing, but because the principles that make a defense durable—attack surface reduction, zero trust principles, high-fidelity signals, and behavioral detection—become considerably more important in the face of increased adversary volume, speed, and efficacy.

The clock has gotten faster. Time-to-detect, time-to-investigate, and time-to-respond all need to come down. AI agents and emerging automation are well suited for exactly this: triage, investigation acceleration, and response orchestration are tractable problems, and the tools are improving quickly.
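Bringing those times down starts with measuring them. Here is a minimal sketch, assuming each incident record carries `occurred`, `detected`, and `contained` timestamps (hypothetical field names), that reports the median interval between any two of them in minutes.

```python
from datetime import datetime
from statistics import median
from typing import Dict, List


def median_minutes(incidents: List[Dict[str, datetime]], start_key: str, end_key: str) -> float:
    """Median elapsed minutes between two timestamps across all incidents."""
    deltas = [
        (incident[end_key] - incident[start_key]).total_seconds() / 60
        for incident in incidents
    ]
    return median(deltas)
```

For example, `median_minutes(incidents, "occurred", "detected")` tracks time-to-detect and `median_minutes(incidents, "detected", "contained")` tracks time-to-respond. The median is used rather than the mean so that one unusually long incident does not dominate the trend.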

The question isn’t whether AI changes the threat. It does. The question is whether it changes the fundamental structure of the problem for defenders who are doing the right things. And I don’t believe it does.