Cybersecurity predictions, Q3 2024 edition

Anyone who’s worked in cybersecurity for a meaningful amount of time has been asked to make predictions. Here are two predictions I’ve made that have endured over the past 2-3 years.

MSSP, MDR, and all other defensive managed services coalesce into SOC-as-a-Service.

All of the historical managed security service market categories are dancing around this eventuality.

The simplest way to think about a security operations center, or SOC, is as a vertically integrated set of functions whose primary reason for being is to detect and respond to cybersecurity threats. And Incident Management 101 tells us that to be effective at detection and response, you need to understand where your incidents come from (threat vectors) and the corresponding attack paths (root causes; there is rarely just one).

Traditional managed security services focus almost exclusively on detection and response. This is great, as it’s exceptionally difficult to be exceptional at detection and response. But the smart money is on maximizing what you learn from your incidents and making decisive changes to eliminate threat vectors, reduce attack surface, and ultimately to significantly raise the cost to adversaries who want to meddle in your environment.

It’s overwhelming to think about the dozens of cybersecurity market categories that one could Frankenstein together as bookends for detection and response to build a highly effective SOC. Is it attack surface management (ASM), vulnerability management (VM), exposure management, cloud security posture management (CSPM), incident response (IR)? Very few organizations need any one of these functions turned up to 11. What most organizations need is a subset of these functions working exceptionally well together.

So again, the destination here is a vertically integrated and highly operationally focused slice of some of these functions: one that not only detects and responds to threats, but also systematically works to reduce the number and severity of incidents, effectively and at scale.

The browser becomes the most important device of all.

We love to say things like “identity is the new perimeter.” This statement may be accurate, and helpful for convincing people that they should invest heavily in protecting identity. But it can lead to superficial implementations that fixate on the identity provider, or IdP, and leave us with gaps in our understanding of what’s being done to circumvent obvious points of identity protection, and of what happens once a session exists.

Trust is increasingly pinned to the browser. It’s how we access the IdP in the first place, and it’s where any modern organization does nearly all of its work after authenticating via the IdP.

Application control, including application allow lists (historically called whitelisting) and behavioral controls, is a hallmark of great endpoint protection. These controls are available for the browser, but they are clunky to manage and far from mainstream in the enterprise. Ad and script blockers are absolutely critical to protecting end users from all manner of shenanigans, but both feel like moving targets: purveyors of the most popular browsers don’t like them, and these interdictions can be at odds with interoperability and ease of use.

Soon, we’ll see widespread acceptance of the importance of the browser, and it’ll become commonplace to instrument and think about browser protection, integrity, and observability in the same ways that we’ve come to think about these concepts on traditional end user platforms, like macOS and Windows (Linux, too, I know there are dozens of you!).

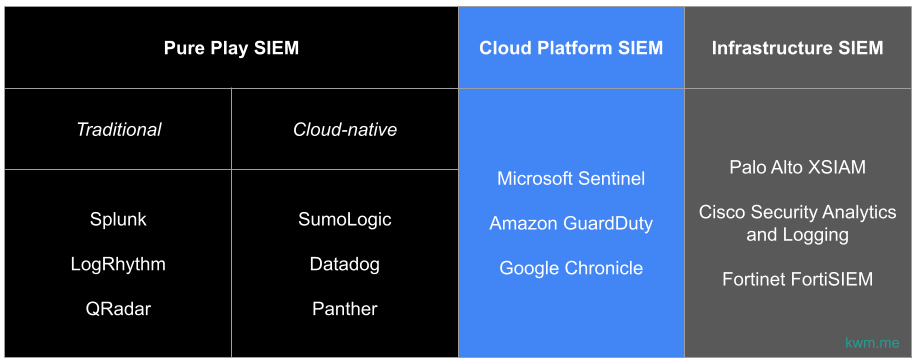

Above graphic is illustrative, not comprehensive.
